Tutorial

The author selected the COVID-19 Relief Fund to receive a donation as part of the Write for DOnations program.

Introduction

The Python standard library includes the unittest module to help you write and run tests for your Python code.

Tests written using the unittest module can help you find bugs in your programs, and prevent regressions from occurring as you change your code over time. Teams adhering to test-driven development may find unittest useful to ensure all authored code has a corresponding set of tests.

In this tutorial, you will use Python’s unittest module to write a test for a function.

Prerequisites

To get the most out of this tutorial, you’ll need:

- An understanding of functions in Python. You can review the How To Define Functions in Python 3 tutorial, which is part of the How To Code in Python 3 series.

Defining a TestCase Subclass

One of the most important classes provided by the unittest module is named TestCase. TestCase provides the general scaffolding for testing our functions. Let’s consider an example:
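A minimal sketch of such a file, assuming it is saved as test_add_fish_to_aquarium.py (the file name used later in this tutorial). The wording of the error message is an assumption made for illustration:

```python
import unittest


def add_fish_to_aquarium(fish_list):
    # Reject lists with more than 10 fish.
    if len(fish_list) > 10:
        # The message text is assumed for illustration.
        raise ValueError("A maximum of 10 fish can be added to the aquarium")
    return {"tank_a": fish_list}


class TestAddFishToAquarium(unittest.TestCase):
    def test_add_fish_to_aquarium_success(self):
        # Call the function with a known input and compare against
        # the value we expect it to return.
        actual = add_fish_to_aquarium(fish_list=["shark", "tuna"])
        expected = {"tank_a": ["shark", "tuna"]}
        self.assertEqual(actual, expected)
```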

First we import unittest to make the module available to our code. We then define the function we want to test—here it is add_fish_to_aquarium.

In this case our add_fish_to_aquarium function accepts a list of fish named fish_list, and raises an error if fish_list has more than 10 elements. The function then returns a dictionary mapping the name of a fish tank 'tank_a' to the given fish_list.

A class named TestAddFishToAquarium is defined as a subclass of unittest.TestCase. A method named test_add_fish_to_aquarium_success is defined on TestAddFishToAquarium. test_add_fish_to_aquarium_success calls the add_fish_to_aquarium function with a specific input and verifies that the actual returned value matches the value we’d expect to be returned.

Now that we’ve defined a TestCase subclass with a test, let’s review how we can execute that test.

Executing a TestCase

In the previous section, we created a TestCase subclass named TestAddFishToAquarium. From the same directory as the test_add_fish_to_aquarium.py file, let’s run that test with the command python -m unittest test_add_fish_to_aquarium.py.

We invoked the Python library module named unittest with python -m unittest. Then, we provided the path to the file containing our TestAddFishToAquarium TestCase as an argument.

After we run this command, the unittest module runs our test and tells us that it ran OK. The single . on the first line of the output represents our passed test.

Note: unittest recognizes a test method as any TestCase method whose name begins with test. For example, def test_add_fish_to_aquarium_success(self) is recognized as a test and will be run as such. def example_test(self), conversely, would not be recognized as a test because its name does not begin with test. Only methods beginning with test will be run and reported when you run python -m unittest ....
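The naming rule can be checked directly with the default test loader. This is a small sketch; the class name TestNaming and its two methods are hypothetical and exist only for this illustration:

```python
import unittest


class TestNaming(unittest.TestCase):  # hypothetical class for illustration
    def test_is_collected(self):
        self.assertTrue(True)

    def example_test(self):
        # Never run by the default loader: the name does not start with "test".
        raise AssertionError("this method is not collected")


# getTestCaseNames returns the method names the loader treats as tests.
collected = unittest.defaultTestLoader.getTestCaseNames(TestNaming)
print(list(collected))  # ['test_is_collected']
```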

Now let’s try a test with a failure.

We modify the following highlighted line in our test method to introduce a failure:

The modified test will fail because add_fish_to_aquarium won’t return 'rabbit' in its list of fish belonging to 'tank_a'. Let’s run the test.

Again, from the same directory as test_add_fish_to_aquarium.py, we run python -m unittest test_add_fish_to_aquarium.py.

When we run this command, the failure output indicates that our test failed. The actual output of {'tank_a': ['shark', 'tuna']} did not match the (incorrect) expectation we added to test_add_fish_to_aquarium.py of {'tank_a': ['rabbit']}. Notice also that instead of a ., the first line of the output now has an F. Whereas . characters are printed when tests pass, F is printed when unittest runs a test that fails.

Now that we’ve written and run a test, let’s try writing another test for a different behavior of the add_fish_to_aquarium function.

Testing a Function that Raises an Exception

unittest can also help us verify that the add_fish_to_aquarium function raises a ValueError Exception if given too many fish as input. Let’s expand on our earlier example, and add a new test method named test_add_fish_to_aquarium_exception:
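A sketch of the expanded file, under the same assumptions as before: the error message text is illustrative, and the variable name excessive_fish is hypothetical.

```python
import unittest


def add_fish_to_aquarium(fish_list):
    if len(fish_list) > 10:
        # The message text is assumed for illustration.
        raise ValueError("A maximum of 10 fish can be added to the aquarium")
    return {"tank_a": fish_list}


class TestAddFishToAquarium(unittest.TestCase):
    def test_add_fish_to_aquarium_success(self):
        actual = add_fish_to_aquarium(fish_list=["shark", "tuna"])
        expected = {"tank_a": ["shark", "tuna"]}
        self.assertEqual(actual, expected)

    def test_add_fish_to_aquarium_exception(self):
        excessive_fish = ["shark"] * 25
        # The block passes only if a ValueError is raised inside it.
        with self.assertRaises(ValueError) as exception_context:
            add_fish_to_aquarium(fish_list=excessive_fish)
        # The raised exception is available on the context object.
        self.assertEqual(
            str(exception_context.exception),
            "A maximum of 10 fish can be added to the aquarium",
        )
```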

The new test method test_add_fish_to_aquarium_exception also invokes the add_fish_to_aquarium function, but it does so with a 25-element list containing the string 'shark' repeated 25 times.

test_add_fish_to_aquarium_exception uses the with self.assertRaises(...) context manager provided by TestCase to check that add_fish_to_aquarium rejects the input list as too long. The first argument to self.assertRaises is the Exception class that we expect to be raised, in this case ValueError. The self.assertRaises context manager is bound to a variable named exception_context. The exception attribute on exception_context contains the underlying ValueError that add_fish_to_aquarium raised. When we call str() on that ValueError to retrieve its message, it returns the exception message we expected.

From the same directory as test_add_fish_to_aquarium.py, let’s run our test with python -m unittest test_add_fish_to_aquarium.py.

When we run this command, the new test passes. Notably, it would have failed if add_fish_to_aquarium either didn’t raise an Exception, or raised a different Exception (for example TypeError instead of ValueError).

Note: unittest.TestCase exposes a number of other methods beyond assertEqual and assertRaises that you can use. The full list of assertion methods can be found in the documentation, but a selection is included here:

| Method | Assertion |
|---|---|
| assertEqual(a, b) | a == b |
| assertNotEqual(a, b) | a != b |
| assertTrue(a) | bool(a) is True |
| assertFalse(a) | bool(a) is False |
| assertIsNone(a) | a is None |
| assertIsNotNone(a) | a is not None |
| assertIn(a, b) | a in b |
| assertNotIn(a, b) | a not in b |
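As a brief illustration of several of these methods together, here is a sketch; the class name TestAssertionSampler and the tank dictionary are hypothetical:

```python
import unittest


class TestAssertionSampler(unittest.TestCase):  # hypothetical class for illustration
    def test_selected_assertions(self):
        tank = {"tank_a": ["shark", "tuna"]}
        self.assertNotEqual(tank["tank_a"], [])
        self.assertTrue(tank)   # a non-empty dict is truthy
        self.assertFalse([])    # an empty list is falsy
        self.assertIsNone(tank.get("tank_b"))
        self.assertIsNotNone(tank.get("tank_a"))
        self.assertIn("shark", tank["tank_a"])
        self.assertNotIn("rabbit", tank["tank_a"])
```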

Now that we’ve written some basic tests, let’s see how we can use other tools provided by TestCase to harness whatever code we are testing.

Using the setUp Method to Create Resources

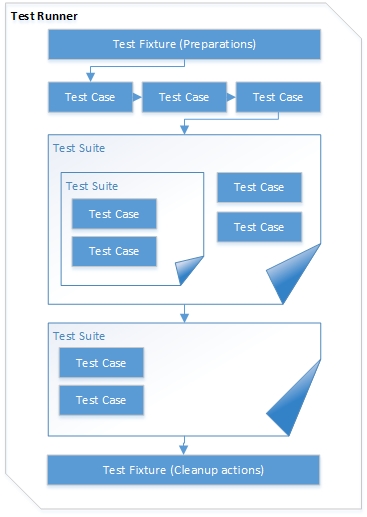

TestCase also supports a setUp method to help you create resources on a per-test basis. setUp methods can be helpful when you have a common set of preparation code that you want to run before each and every one of your tests. setUp lets you put all this preparation code in a single place, instead of repeating it over and over for each individual test.

Let’s take a look at an example:
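A minimal sketch of such a file, assuming it is saved as test_fish_tank.py:

```python
import unittest


class FishTank:
    def __init__(self):
        self.has_water = False

    def fill_with_water(self):
        self.has_water = True


class TestFishTank(unittest.TestCase):
    def setUp(self):
        # Runs before every test method, so each test gets a fresh FishTank.
        self.fish_tank = FishTank()

    def test_fish_tank_empty_by_default(self):
        self.assertFalse(self.fish_tank.has_water)

    def test_fish_tank_can_be_filled(self):
        self.fish_tank.fill_with_water()
        self.assertTrue(self.fish_tank.has_water)
```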

test_fish_tank.py defines a class named FishTank. FishTank.has_water is initially set to False, but can be set to True by calling FishTank.fill_with_water(). The TestCase subclass TestFishTank defines a method named setUp that instantiates a new FishTank instance and assigns that instance to self.fish_tank.

Since setUp is run before every individual test method, a new FishTank instance is instantiated for both test_fish_tank_empty_by_default and test_fish_tank_can_be_filled. test_fish_tank_empty_by_default verifies that has_water starts off as False. test_fish_tank_can_be_filled verifies that has_water is set to True after calling fill_with_water().

From the same directory as test_fish_tank.py, we can run python -m unittest test_fish_tank.py.

If we run the previous command, the output shows that both tests pass.

setUp allows us to write preparation code that is run for all of our tests in a TestCase subclass.

Note: If you have multiple test files with TestCase subclasses that you’d like to run, consider using python -m unittest discover to run more than one test file. Run python -m unittest discover --help for more information.

Using the tearDown Method to Clean Up Resources

TestCase supports a counterpart to the setUp method named tearDown. tearDown is useful if, for example, we need to clean up connections to a database, or modifications made to a filesystem after each test completes. We’ll review an example that uses tearDown with filesystems:
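A minimal sketch of such a file, assuming it is saved as test_advanced_fish_tank.py. The attribute name fish_tank_file_name is an assumption made for illustration, and the file is created in the current working directory:

```python
import os
import unittest


class AdvancedFishTank:
    def __init__(self):
        # Attribute name assumed for illustration.
        self.fish_tank_file_name = "fish_tank.txt"
        with open(self.fish_tank_file_name, "w") as f:
            f.write("shark, tuna")

    def empty_tank(self):
        os.remove(self.fish_tank_file_name)


class TestAdvancedFishTank(unittest.TestCase):
    def setUp(self):
        # Creates fish_tank.txt before each test.
        self.fish_tank = AdvancedFishTank()

    def tearDown(self):
        # Removes fish_tank.txt after each test, whether it passed or failed.
        self.fish_tank.empty_tank()

    def test_fish_tank_writes_file(self):
        with open(self.fish_tank.fish_tank_file_name) as f:
            contents = f.read()
        self.assertEqual(contents, "shark, tuna")
```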

test_advanced_fish_tank.py defines a class named AdvancedFishTank. AdvancedFishTank creates a file named fish_tank.txt and writes the string 'shark, tuna' to it. AdvancedFishTank also exposes an empty_tank method that removes the fish_tank.txt file. The TestAdvancedFishTank TestCase subclass defines both a setUp and a tearDown method.

The setUp method creates an AdvancedFishTank instance and assigns it to self.fish_tank. The tearDown method calls the empty_tank method on self.fish_tank: this ensures that the fish_tank.txt file is removed after each test method runs. This way, each test starts with a clean slate. The test_fish_tank_writes_file method verifies that the default contents of 'shark, tuna' are written to the fish_tank.txt file.

From the same directory as test_advanced_fish_tank.py, let’s run python -m unittest test_advanced_fish_tank.py.

The output reports that the test passes.

tearDown allows you to write cleanup code that is run for all of your tests in a TestCase subclass.

Conclusion

In this tutorial, you have written TestCase classes with different assertions, used the setUp and tearDown methods, and run your tests from the command line.

The unittest module exposes additional classes and utilities that you did not cover in this tutorial. Now that you have a baseline, you can use the unittest module’s documentation to learn more about other available classes and utilities. You may also be interested in How To Add Unit Testing to Your Django Project.

Jenkins shared libraries are a powerful way to share Groovy code between multiple Jenkins pipelines. However, when many Jenkins pipelines, including mission-critical deployment pipelines, depend on such shared libraries, automated testing becomes necessary to prevent regressions whenever changes are introduced into the shared libraries. Despite its drawbacks, the third-party Pipeline Unit Testing framework satisfies some of these automated testing needs. It lets you mock the execution of pipeline steps and check for expected behaviors before actually running in Jenkins. However, documentation for this third-party framework is severely lacking (mentioned briefly here), which is one of many reasons that unit testing for Jenkins shared libraries is usually an afterthought instead of being integrated early. In this blog post, we will see how to unit test a Jenkins shared library with the Pipeline Unit Testing framework.

Testing Jenkins shared library

Example Groovy file

For this tutorial, we look at the following Groovy build wrapper as the example under test:

After the shared library is set up properly, you can call the above Groovy build wrapper in Jenkinsfile as follows to use default parameters:

or you can set the parameters in the wrapper’s body as follows:

In the next section, we will look into automated testing of both use cases using JenkinsPipelineUnit.

Using JenkinsPipelineUnit

To use JenkinsPipelineUnit, it is recommended to set up IntelliJ following this tutorial.

To test the above buildWrapper.groovy using the Jenkins Pipeline Unit, you can start with a unit test for the second use case as follows:

Unfortunately, when executing that unit test, it is very likely that you will get various errors that are not well-explained by JenkinsPipelineUnit documentation.

The short explanation is that the mock execution environment is not properly set up. First, we need to call setUp() from the JenkinsPipelineUnit base class BaseRegressionTest to set up the mock execution environment. In addition, since most Groovy scripts contain the statement checkout scm, we need to mock the Jenkins global variable scm, which represents the SCM state (e.g., Git commit) associated with the current Jenkinsfile. The simplest way to mock it is to set it to an empty state as follows:

We can also set it to a more meaningful value such as a Git branch as follows:

However, an empty scm will usually suffice. Besides Jenkins variables, we can also register different Jenkins steps/commands as follows:

After going through the setup steps above, you should have a setup method like this:

Rerunning the above unit test will show the full stack of execution:

For automated regression detection, we need to save the expected call stack above into a file at a location known to JenkinsPipelineUnit. You can specify the location of such call stacks by overriding the callStackPath field of BaseRegressionTest in the setUp method. The file name should follow the convention ${ClassName}_${subname}.txt, where subname is specified by the testNonRegression method in each test case. Then, you can update the above test case to perform a regression check as follows:

In this example, the above call stack should be saved into the DemoTest_configured.txt file at the location specified by callStackPath. Similarly, you can also have another unit test for the other use case of buildWrapper.

Any change in buildWrapper.groovy will be detected as test failures, as shown in the screen shot below.

In IntelliJ, we can click the Click to see difference link to compare the actual call stack against the expected one saved in the text file.

This test class shows a complete example, together with files of expected call stacks.

Other usage

You can also use PipelineUnitTests to test a Jenkinsfile. In most cases, testing a Jenkinsfile is similar to testing the Groovy files in the vars folder, as explained above.

The process is much the same: you need to mock out some global variables and functions corresponding to Jenkins pipeline steps. You will need to call printCallStack to obtain the expected output and save it into a text file. Then, you can use testNonRegression to verify automatically that the Jenkinsfile has not regressed. This test class shows an example of testing a Jenkinsfile using PipelineUnitTests.

Note that, unlike Groovy files in the vars folder, Jenkinsfiles are regularly updated and usually NOT depended on or used by any other code. Therefore, automated tests for Jenkinsfiles are not very common because of the cost and effort required.